Executive Summary: Market researchers can validate AI to protect data integrity and insight quality by embedding structured validation into everyday workflows, combining benchmarking against trusted data, prompt testing, continuous monitoring for drift and consistent human oversight. As AI adoption accelerates across research processes—from summarization to synthetic data simulation—validation becomes an operational discipline, not a one-time check. By balancing automated efficiency with expert review, organizations can ensure AI-generated insights remain accurate, nuanced and decision-ready while scaling innovation responsibly.

1. The AI Validation Gap in Market Research Workflows

Artificial intelligence is rapidly becoming part of the everyday workflow for market research teams. Across global organizations, AI now summarizes open-ended responses, generates topline reports, drafts insights, and even simulates customer reactions through synthetic audiences. The benefits are clear: faster turnaround, lower costs and the ability to process more information than human analysts alone ever could.

But as adoption accelerates, an important imbalance is emerging. The companies building AI invest heavily in validating it. The companies using AI often do not.

That gap matters—especially in market research, where small interpretive errors can quietly shape high-stakes business decisions.

Leading AI developers such as OpenAI, Google DeepMind, Anthropic, and Meta dedicate enormous resources to evaluation. Before major models are released, they undergo months of stress testing. Researchers probe for failure points with adversarial prompts. Large human review panels score outputs. Automated systems run thousands of benchmark tests designed to surface hallucinations, bias, reasoning gaps and edge-case instability.

Validation at that level isn’t a side effort. Industry analysts estimate frontier AI labs devote roughly 10–20% of development budgets to testing and evaluation—often translating to tens of millions of dollars before a model ever reaches users.

Yet once those same models enter enterprise environments, the intensity of scrutiny often drops. Many organizations rely on limited pilots or informal team testing before embedding AI into workflows. The assumption is understandable: if the technology provider validated the model so rigorously, it should perform reliably in practice.

But that logic misses a critical distinction: Model developers validate general capability. Enterprises must validate specific application.

2. Why Enterprise AI Validation Is Operational Discipline, Not Optional

That distinction is especially important in market research. Research outputs are rarely binary or purely factual. They require interpretation, synthesis and contextual judgment. An AI system doesn’t need to generate an obvious error to create risk. It only needs to be subtly wrong in ways that are difficult to detect.

Most researchers experimenting with AI in research workflows have seen this firsthand:

- A model summarizes hundreds of qualitative responses and unintentionally overemphasizes one theme while muting another.

- It offers a polished explanation for a pattern that isn’t fully supported by the data.

- It smooths weak signals in concept testing, producing conclusions that sound decisive but lack appropriate caution.

Because AI outputs are articulate and confident, they can be highly persuasive. That makes them easy to accept—and harder to challenge.

The creator of a tool validates its safety in general terms. The practitioner validates its use in a specific situation. AI demands the same mindset. Models are flexible systems. Their behavior shifts depending on prompts, data inputs, workflows and context. A model that performs exceptionally on broad benchmarks may behave differently when asked to interpret brand perceptions in a niche market or synthesize culturally specific qualitative feedback.

That’s why enterprise-level AI validation isn’t optional. It’s operational discipline.

For research teams, this doesn’t mean replicating the massive safety infrastructures of AI labs. It means building pragmatic validation into everyday practice.

- Some organizations are developing internal benchmark datasets—previous surveys, coded qualitative studies, validated analyses—to compare AI outputs against known standards.

- Others are implementing checks for unsupported claims or statistical inconsistencies in generated summaries.

- Many teams are discovering that small prompt variations can produce meaningfully different conclusions, underscoring the importance of testing prompt stability before embedding AI into automated research workflows.
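Two of these practices, benchmarking against coded studies and testing prompt stability, can be reduced to a simple score. The Python sketch below is one illustrative way to do that, not a prescribed method. It assumes every open-ended response has been given a single theme code, once by a human coder and once by the AI workflow. The function names, the sample codes and the choice of Cohen's kappa as a chance-corrected measure are all assumptions made for this example.

```python
from collections import Counter
from itertools import combinations


def agreement_rate(labels_a, labels_b):
    """Share of responses that received the same theme code in both lists."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    matches = sum(a == b for a, b in zip(labels_a, labels_b))
    return matches / len(labels_a)


def cohen_kappa(labels_a, labels_b):
    """Agreement between two codings, corrected for chance agreement."""
    n = len(labels_a)
    observed = agreement_rate(labels_a, labels_b)
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both codings pick the same theme at random.
    expected = sum(counts_a[theme] * counts_b[theme] for theme in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both codings use one identical theme throughout
    return (observed - expected) / (1 - expected)


def prompt_stability(runs):
    """Lowest pairwise agreement across codings produced by prompt variants."""
    return min(agreement_rate(a, b) for a, b in combinations(runs, 2))


# Hypothetical data: six responses from a previously coded study.
human_codes = ["price", "service", "price", "quality", "service", "price"]
ai_codes = ["price", "service", "quality", "quality", "service", "price"]

# Hypothetical codings of the same responses under three prompt wordings.
prompt_runs = [
    ai_codes,
    ["price", "service", "quality", "quality", "price", "price"],
    ["price", "service", "price", "quality", "service", "price"],
]

print("Agreement with benchmark:", round(agreement_rate(human_codes, ai_codes), 2))
print("Cohen's kappa:", round(cohen_kappa(human_codes, ai_codes), 2))
print("Worst-case prompt stability:", round(prompt_stability(prompt_runs), 2))
```

A team would set its own acceptance thresholds for these scores. A low worst-case stability figure suggests the conclusions depend on how the prompt is worded rather than on the data.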

Most importantly, experienced researchers must remain closely involved. Human expertise is still the most reliable safeguard against lost nuance, overstated certainty, and conclusions that sound right but don’t fully align with the evidence.

Validation also isn’t a one-time exercise. AI systems evolve continuously. Models are updated. Prompts are refined. New data sources enter pipelines. A workflow that performed well last quarter can drift quietly over time.
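One pragmatic way to watch for this kind of drift is to rerun a fixed set of benchmark responses through the workflow on a schedule and compare the resulting theme mix with the one recorded when the workflow was approved. The Python sketch below illustrates the idea under stated assumptions: the function names are hypothetical, total variation distance is just one reasonable measure of how far two distributions sit apart, and the 0.10 threshold is a placeholder that each team would need to calibrate.

```python
from collections import Counter


def theme_shares(labels):
    """Convert a list of theme codes into each theme's share of the total."""
    if not labels:
        raise ValueError("labels must not be empty")
    total = len(labels)
    return {theme: count / total for theme, count in Counter(labels).items()}


def total_variation_distance(baseline, current):
    """Half the sum of absolute share differences (0 = identical, 1 = disjoint)."""
    themes = set(baseline) | set(current)
    return 0.5 * sum(abs(baseline.get(t, 0.0) - current.get(t, 0.0)) for t in themes)


def check_drift(baseline_labels, current_labels, threshold=0.10):
    """Return (drifted, distance) for two codings of the same benchmark set.

    The threshold is an illustrative assumption, not an industry standard.
    """
    distance = total_variation_distance(
        theme_shares(baseline_labels), theme_shares(current_labels)
    )
    return distance > threshold, distance


# Hypothetical codings of the same ten benchmark responses, one quarter apart.
last_quarter = ["price"] * 5 + ["service"] * 3 + ["quality"] * 2
this_quarter = ["price"] * 7 + ["service"] * 2 + ["quality"] * 1

drifted, distance = check_drift(last_quarter, this_quarter)
print(f"Distance from baseline: {distance:.2f}, needs review: {drifted}")
```

Because the benchmark inputs stay fixed, any movement in the theme mix points to a change in the model, the prompt or the pipeline, which is the signal that a human reviewer should take a closer look.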

The strategic risk isn’t dramatic failure. Obvious errors are usually caught. The greater threat is gradual distortion.

If AI-generated outputs consistently emphasize certain narratives, mute uncertainty or introduce subtle bias, they can steadily influence how leaders understand customers and markets. Over time, those small shifts shape strategy, investment decisions, innovation priorities and brand direction. When market research informs product development, pricing, positioning and experience design, even minor distortions carry consequences.

A practical rule of thumb: allocate a meaningful share of each AI initiative’s effort and resources, often 10–20%, to validation and monitoring. Not coincidentally, that mirrors the proportion leading AI developers are estimated to dedicate to evaluation. The people building these systems understand their complexity. Responsible enterprise users should too.

3. Responsible AI in Research: Human Oversight, Synthetic Data and Trustworthy Insights

Market research has always been about separating signal from noise. As AI becomes more embedded in the toolkit, that mission doesn’t change. The discipline required to uphold it does.

AI should handle what it does best—speed, scale and repetitive processing. Humans must stay accountable for accuracy, data integrity and judgment.

That principle becomes even more important as new methods emerge, including synthetic data and simulated audiences. These approaches offer powerful ways to model scenarios, protect privacy and accelerate learning—but they also raise new validation questions.

- How closely do synthetic populations reflect real behaviors?

- Where are the boundaries between simulation and inference?

- How should synthetic data outputs be verified against observed data?

Using these tools responsibly means applying the same rigor: validate inputs, test assumptions, compare outputs to reality and maintain human oversight where interpretation matters most.
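Comparing outputs to reality can start with something as simple as lining up answer shares for the same survey question. The Python sketch below is a minimal illustration, assuming a team has answer counts from both a synthetic audience and a real fieldwork sample. The function names, the sample counts and the five-point tolerance are hypothetical, and the comparison is purely descriptive: it does not account for sampling error, so a formal statistical test may be the better choice for small samples.

```python
def share_gaps(observed_counts, synthetic_counts):
    """Gap per answer option, in percentage points (synthetic minus observed)."""
    observed_total = sum(observed_counts.values())
    synthetic_total = sum(synthetic_counts.values())
    if observed_total == 0 or synthetic_total == 0:
        raise ValueError("both samples need at least one response")
    gaps = {}
    for option in sorted(set(observed_counts) | set(synthetic_counts)):
        observed_share = observed_counts.get(option, 0) / observed_total
        synthetic_share = synthetic_counts.get(option, 0) / synthetic_total
        gaps[option] = 100 * (synthetic_share - observed_share)
    return gaps


def flag_gaps(gaps, tolerance_points=5.0):
    """Keep only the options whose gap exceeds the tolerance.

    The default tolerance is an illustrative assumption.
    """
    return {option: gap for option, gap in gaps.items() if abs(gap) > tolerance_points}


# Hypothetical purchase-intent counts from real fieldwork and a synthetic audience.
observed = {"very likely": 120, "somewhat likely": 200, "not likely": 80}
synthetic = {"very likely": 450, "somewhat likely": 380, "not likely": 170}

gaps = share_gaps(observed, synthetic)
flagged = flag_gaps(gaps)
for option, gap in flagged.items():
    print(f"{option}: synthetic differs from observed by {gap:+.1f} points")
```

Flagged options are the places where a researcher should ask whether the synthetic audience is overstating or understating a behavior before the result informs a decision.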

AI Validation Is an Everyday Discipline

The credibility of market research depends on the choices organizations make now: data integrity over convenience, validation over velocity, responsibility over shortcuts.

Organizations that embed validation into their AI workflows will build insight leaders can trust. Those that prioritize speed alone may move fast, but on less certain ground.

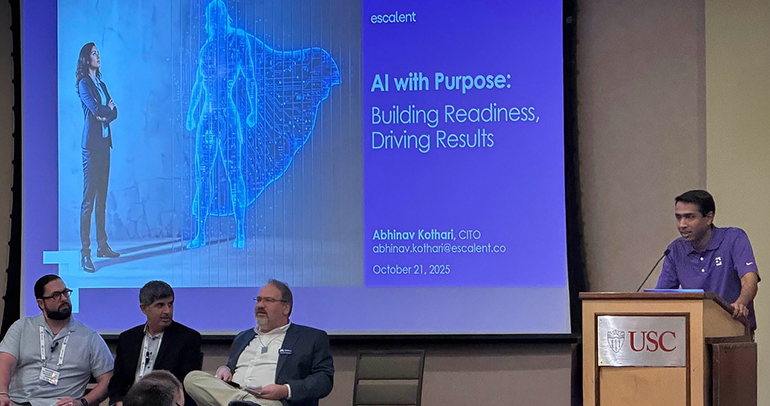

At Escalent, we help brands operationalize human-guided AI in market research—combining technological acceleration with disciplined validation so insights remain accurate, defensible and decision-ready. As an AI-enabled market research and advisory partner, Escalent works with research leaders to ensure AI strengthens—not shortcuts—the integrity of evidence and strategy.

Because in the end, better tools only create better outcomes when they’re used responsibly.

If you want a deeper dive, watch “Synthetic Data Without the Hype,” Escalent’s on-demand webinar hosted by Chris Barnes and Dyna Boen, in which they share practical guidance based on what we’ve learned from training our teams and working with Fortune 100 clients.

FAQs

Q: How can market researchers validate AI outputs without slowing innovation?

A: A disciplined validation framework doesn’t require massive infrastructure. Teams can benchmark AI outputs against prior studies, test prompt stability, implement checks for unsupported claims and keep experienced researchers in the review loop.

Q: Why is human validation still necessary if AI models are already tested?

A: Model providers validate general performance. Enterprises must validate real-world use cases. Context, data inputs and workflows change how models behave, which makes human oversight essential for accurate, decision-ready insights.